Only the following Databricks Runtime versions are supported:

Shut down idle clusters without losing work. Because the client application is decoupled from the cluster, it is unaffected by cluster restarts or upgrades, which would normally cause you to lose all the variables, RDDs, and DataFrame objects defined in a notebook.

For Python development with SQL queries, Databricks recommends that you use the Databricks SQL Connector for Python instead of Databricks Connect. The Databricks SQL Connector for Python is easier to set up than Databricks Connect. Also, Databricks Connect parses and plans job runs on your local machine, while the jobs themselves run on remote compute resources. This can make it especially difficult to debug runtime errors. The Databricks SQL Connector for Python submits SQL queries directly to remote compute resources and fetches results.
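As a rough illustration of the Databricks SQL Connector for Python workflow (submit a query to remote compute, then fetch the results), here is a minimal sketch. It assumes the `databricks-sql-connector` package is installed. The helper name `run_query`, the probe query, and the environment variable names are illustrative assumptions, not something this article specifies.

```python
import os


def run_query(connection, query):
    """Submit one SQL query over an open connection and return all rows."""
    # The cursor is used as a context manager so it is closed after fetching.
    with connection.cursor() as cursor:
        cursor.execute(query)
        return cursor.fetchall()


def main():
    # Imported here so the helper above can be used without the package.
    from databricks import sql

    # Assumption: connection details are supplied through these
    # (hypothetically named) environment variables.
    with sql.connect(
        server_hostname=os.environ["DATABRICKS_SERVER_HOSTNAME"],
        http_path=os.environ["DATABRICKS_HTTP_PATH"],
        access_token=os.environ["DATABRICKS_TOKEN"],
    ) as connection:
        for row in run_query(connection, "SELECT 1 AS probe"):
            print(row)
```

Calling `main()` runs the query on the remote compute resource. Nothing is parsed or planned locally, so a failing query surfaces as an error from the server rather than from a local Spark session.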

You do not need to restart the cluster after changing Python or Java library dependencies in Databricks Connect, because client sessions are isolated from each other in the cluster.

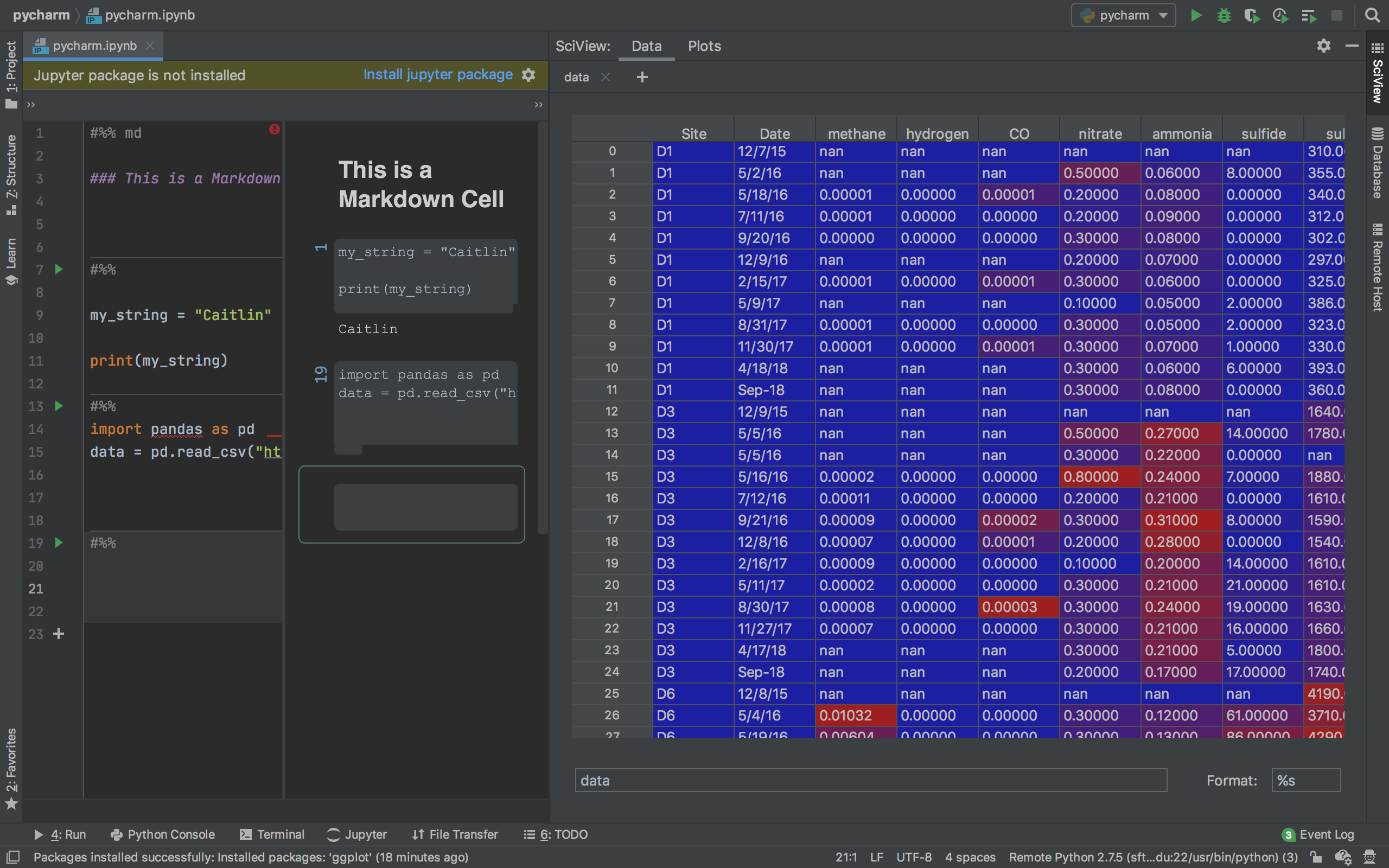


Overview

Databricks Connect is a client library for Databricks Runtime. It allows you to write jobs using Spark APIs and run them remotely on an Azure Databricks cluster instead of in the local Spark session.

For example, when you run the DataFrame command (.).groupBy(.).agg(.).show() using Databricks Connect, the parsing and planning of the job runs on your local machine. Then, the logical representation of the job is sent to the Spark server running in Azure Databricks for execution in the cluster.

Run large-scale Spark jobs from any Python, Java, Scala, or R application. Anywhere you can import pyspark, import, or require(SparkR), you can now run Spark jobs directly from your application, without needing to install any IDE plugins or use Spark submission scripts.
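To make the local-planning, remote-execution split concrete, here is a minimal sketch of a chained DataFrame command of the kind described in the overview. It assumes a Databricks Connect client that is already installed and configured, so that `SparkSession.builder.getOrCreate()` returns a session bound to the remote cluster. The function name `summarize`, the file path, and the column names are hypothetical.

```python
def summarize(spark, path, key, value):
    """Build the chained DataFrame command: read, group, aggregate.

    These calls only describe the job. Execution is triggered later by an
    action such as show().
    """
    df = spark.read.format("parquet").load(path)
    return df.groupBy(key).agg({value: "sum"})


def main():
    # With Databricks Connect installed, pyspark is provided by the client.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.getOrCreate()

    # Hypothetical path and column names, for illustration only.
    result = summarize(spark, "/hypothetical/data/events.parquet", "country", "amount")

    # show() is the action: the plan built locally is sent to the cluster,
    # executed there, and the rows are returned for printing.
    result.show()
```

Keeping the plan-building code in a function that takes the session as an argument also makes it easy to step through in an IDE debugger before any remote work is triggered.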


Databricks Connect allows you to connect your favorite IDE (Eclipse, IntelliJ, PyCharm, RStudio, Visual Studio Code), notebook server (Jupyter Notebook, Zeppelin), and other custom applications to Azure Databricks clusters.

This article explains how Databricks Connect works, walks you through the steps to get started with Databricks Connect, explains how to troubleshoot issues that may arise when using Databricks Connect, and describes the differences between running with Databricks Connect and running in an Azure Databricks notebook.